So the other day I attended a workshop on Networking Security held at my college. After returning home, I naturally logged in to my Facebook account and navigated to the official page of the society that had organized the event to look for any good photos of myself. When I did find one, I clicked on the tag button to, well… tag myself.

I was surprised to see how accurately Facebook recognized my face in a crowd full of people, and in a not-so-high-res image at that. I went on scrolling through more photos and the same thing happened. It wasn’t just me, either: I got suggestions to tag my friends’ faces even when I couldn’t recognize them myself! I was awestruck, and impressed.

I had read that technologies which can recognize whether an object in an image is a face have been around for quite a while, but the level of accuracy got me intrigued. A handful of Google searches later, I found out about a new facial recognition technology called “DeepFace”. I wish it was called “DerpFace”, but that’s probably why I am not a product manager there. *sigh*

SO WHAT IS DEEPFACE, AND IS IT AS OMINOUS AS IT SOUNDS?

Thanks to advances in Artificial Intelligence, researchers at Facebook have developed a set of algorithms that perform ‘facial verification’ (recognizing that two images show the same face) rather than the more familiar ‘facial recognition’ (putting a name to a face).

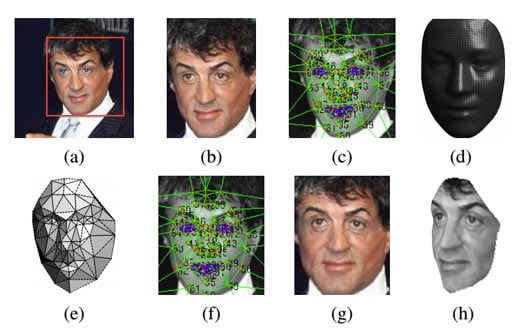

According to MIT’s Technology Review, DeepFace first uses a 3D model to rotate faces virtually so that the person in the photo appears to be looking at the camera. A simulated neural network (involving over 120 million parameters) then works out a numerical description of the reoriented face, and DeepFace finally compares the descriptions from two images to determine whether they are similar enough to show the same face.
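To make that last comparison step a little more concrete, here is a minimal sketch in Python. It assumes the neural network has already turned each face into a list of numbers, and it compares two such lists with cosine similarity against a cut-off. The three-number descriptors, the `same_face` helper and the 0.9 threshold are all made up for illustration; this is not DeepFace's actual metric or code.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def same_face(desc_a, desc_b, threshold=0.9):
    """Hypothetical verification rule: two descriptors count as the same
    face when their similarity reaches the (assumed) threshold."""
    return cosine_similarity(desc_a, desc_b) >= threshold

# Toy descriptors, invented for this example. A real network would
# output far longer vectors.
photo_1 = [0.9, 0.1, 0.4]   # me at the workshop
photo_2 = [0.8, 0.2, 0.5]   # me again, different angle
photo_3 = [0.1, 0.9, 0.2]   # someone else entirely

print(same_face(photo_1, photo_2))
print(same_face(photo_1, photo_3))
```

With these toy numbers the first pair clears the threshold and the second does not. Notice that the sketch only ever answers "same face or not?" for a pair of photos, which is verification; putting a name to a face would mean running a comparison like this against a whole collection of labelled photos.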

One of the factors used in the algorithms is the distance between a user’s eyes and nose, which is why it may sometimes suggest the wrong people to tag because of similarities in facial structure. #AccidentallyFoundDoppelganger

Asked whether two unfamiliar photos of faces show the same person, a human being will get it right 97.53% of the time. DeepFace, given the same task, gets it right 97.25% of the time, regardless of variations in lighting or angle!

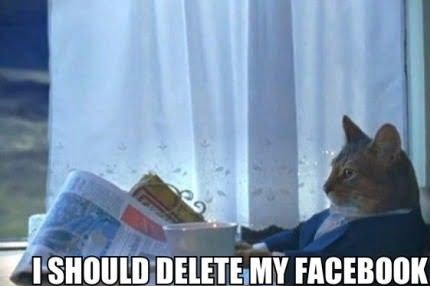

THE GOOD, THE BAD AND THE SCARY

If you read around on the internet enough, you will find articles speculating about stores eventually using DeepFace on their security cameras to identify who a customer is and, based on their photos, what type of clothes they like to wear, so that the store clerks can then point them in the direction of the item they’re most likely to buy.

The scary part about it is that by mapping the GPS location of various social posts and cross-referencing that information against a person’s online identity, in a matter of seconds you can learn more than you ever wanted to know about a stranger’s sepia-tinted dinner last night or how they looked first thing this morning! #NoFilter #YeahRight!

In fact, it is so easy that a YouTuber by the name of Jack Vale did something very much like this, using location-based searches to find social data and freak out unsuspecting strangers.

By – Raunaq Singh